The securities processing platform

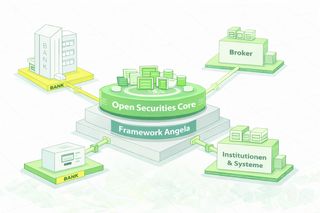

DISACON CORE is built on the ANGELA framework, a proprietary development by DISACON AG. It leverages proven open-source and cloud technologies selected to ensure operational reliability, maintainability, and long-term integrability.

Transactions & Processing

Processing of business events from connected trading and order systems. Validation, enrichment, and hand-off to downstream processes.

Clearing & Settlement

Control of clearing and settlement processes, covering status tracking, deadlines, and exceptions (including defined reprocessing paths).

Holdings & Corporate Actions

Rules-based processing of corporate actions and follow-up processes on holdings; impacts on holdings and postings are documented and traceable.

Tax & Regulatory Processes

Implementation of tax logic and regulatory requirements along the processing chain. Variants are modeled via configurable rules and parameters.

Backoffice & Operations

Operational workflows for investigation and exception handling. The goal is a consistent view of processes, states, and processing status, from both a domain and a technical perspective.

Platform Concept & Architecture Principles

DISACON CORE is designed so that domain logic remains stable in operation for years, changes can be introduced in a controlled manner, and processing steps are auditable at any time.

Principle: Separation of Responsibilities

Domain rules, process orchestration, persistence, and integration are deliberately decoupled. This allows changes to be introduced in one layer without destabilizing the entire platform.

- Domain Logic: domain rules, validations, exceptions

- Technology: processing, persistence, observability

- Integration: adapters, APIs, events, batch connections

- Operations: deployment, scalability, security, compliance

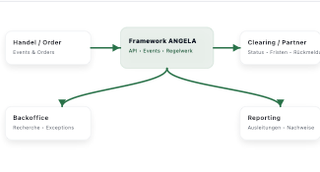

Principle: Event- and Rules-Based Processing

Processes are modeled as chains of traceable processing steps. Triggers can be events (e.g., status changes), time windows (batch), or external feedback (clearing/settlement, custodian).

- Event-driven: asynchronous processing and loose coupling

- Batch-capable: processing in defined time windows (e.g., end-of-day runs)

- Rules-based: domain variants without deep code changes

Principle: Auditability and Reproducibility

For regulated processes, it is crucial that results are explainable. DISACON CORE aims to make every processing step traceable from a domain and technical perspective (audit trail), including the rules used, input parameters, and relevant state changes.

This supports analysis, troubleshooting, the provision of evidence, and historical evaluations, even over extended periods.
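
As an illustration of the audit-trail idea above, the following minimal Python sketch records the rule reference, input parameters, and output of a deterministic step, then re-runs the step from the recorded inputs to check that the result can be reproduced. All names (`record_step`, `reproduce`, `settlement_amount`, `pricing@v1`) are hypothetical and are not taken from DISACON CORE.

```python
import hashlib
import json


def fingerprint(payload: dict) -> str:
    """Stable hash of a JSON-serializable payload (keys sorted)."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_step(trail: list, step: str, rule_ref: str, inputs: dict, func) -> dict:
    """Run a deterministic step and append an audit entry for it."""
    output = func(dict(inputs))
    trail.append({
        "step": step,
        "rule": rule_ref,        # which rule version produced the result
        "inputs": dict(inputs),  # input parameters as seen by the step
        "output": output,        # resulting state change
        "output_hash": fingerprint(output),
    })
    return output


def reproduce(entry: dict, func) -> bool:
    """Re-run the step from the recorded inputs and compare with the record."""
    return fingerprint(func(dict(entry["inputs"]))) == entry["output_hash"]


# Hypothetical step; amounts are in cents to keep the arithmetic exact.
def settlement_amount(inputs: dict) -> dict:
    return {"amount_cents": inputs["quantity"] * inputs["price_cents"]}


trail: list = []
record_step(trail, "settlement_amount", "pricing@v1",
            {"quantity": 10, "price_cents": 2550}, settlement_amount)
reproducible = reproduce(trail[0], settlement_amount)
```

The sketch only works because the step is deterministic: the same inputs and the same rule version always yield the same output, which is what makes the recorded entry usable as evidence.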

Technical Modules

The technical architecture ensures that domain logic is implemented in a scalable, traceable, and operationally stable way.

Processing Engine (Orchestration)

Processing is modeled as a sequence of deterministic steps. Each step has defined inputs, outputs, and error paths.

- Step chains (pipelines) instead of ad-hoc logic

- Idempotency for robust retries

- Explicit exception and reprocessing paths
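
The points above can be sketched in a few lines of Python. This is an illustrative sketch, not the DISACON CORE engine: `Pipeline`, `StepFailed`, and the two example steps are assumed names. Completed step results are stored per event and step, so a retry reuses them instead of executing the step again, and a failing step routes the event to an explicit exception list.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical types for illustration only.
Event = dict
Step = Callable[[Event], Event]


class StepFailed(Exception):
    """Raised by a step to send the event down its error path."""


@dataclass
class Pipeline:
    steps: list[tuple[str, Step]]
    # Results keyed by (event id, step name) make retries idempotent.
    completed: dict[tuple[str, str], Event] = field(default_factory=dict)
    # Explicit exception path: (event id, step name, reason).
    exceptions: list[tuple[str, str, str]] = field(default_factory=list)

    def run(self, event: Event) -> Optional[Event]:
        current = event
        for name, step in self.steps:
            key = (event["id"], name)
            if key in self.completed:
                current = self.completed[key]  # already done: reuse the result
                continue
            try:
                current = step(dict(current))  # steps work on a copy
            except StepFailed as exc:
                self.exceptions.append((event["id"], name, str(exc)))
                return None
            self.completed[key] = current
        return current


calls = {"enrich": 0}


def validate(event: Event) -> Event:
    if event["quantity"] <= 0:
        raise StepFailed("quantity must be positive")
    return event


def enrich(event: Event) -> Event:
    calls["enrich"] += 1
    event["currency"] = event.get("currency", "EUR")
    return event


pipeline = Pipeline(steps=[("validate", validate), ("enrich", enrich)])
order = {"id": "evt-1", "quantity": 10}
first = pipeline.run(order)
second = pipeline.run(order)  # retry: no step is executed a second time
bad = pipeline.run({"id": "evt-2", "quantity": 0})
```

A production engine would persist the `completed` map transactionally with the step result. The in-memory dictionary here only shows the principle.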

Rule Engine (Domain Variants)

Rules and variants are maintained and versioned in a traceable way. Changes can be rolled out in a controlled manner and are unambiguously attributable to results.

- Rule versioning & approval processes

- Rule context (validity, institution, product, time)

- Transparent evaluation (explainability)
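
A minimal sketch of how a versioned rule could be selected by context (validity, institution, product, time) and reported alongside the result. The data model, the `settlement_fee` rule, and its rates are invented for illustration and do not describe the actual rule engine.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class RuleVersion:
    rule_id: str
    version: int
    institution: str           # "*" matches any institution
    product: str               # "*" matches any product
    valid_from: date
    valid_to: Optional[date]   # None means open-ended
    rate: float                # illustrative parameter


def select_rule(rules, rule_id, institution, product, on):
    """Return the applicable rule version for the given context and date."""
    candidates = [
        r for r in rules
        if r.rule_id == rule_id
        and r.institution in ("*", institution)
        and r.product in ("*", product)
        and r.valid_from <= on
        and (r.valid_to is None or on <= r.valid_to)
    ]
    if not candidates:
        raise LookupError(f"no valid version of {rule_id} on {on}")
    # Prefer the most specific match, then the highest version number.
    return max(candidates,
               key=lambda r: (r.institution != "*", r.product != "*", r.version))


def evaluate(rules, rule_id, institution, product, on, amount):
    """Apply the rule and report which version produced the result."""
    rule = select_rule(rules, rule_id, institution, product, on)
    return {"result": round(amount * rule.rate, 2),
            "rule": f"{rule.rule_id}@v{rule.version}"}


RULES = [
    RuleVersion("settlement_fee", 1, "*", "*", date(2024, 1, 1), date(2024, 12, 31), 0.001),
    RuleVersion("settlement_fee", 2, "*", "*", date(2025, 1, 1), None, 0.002),
    RuleVersion("settlement_fee", 3, "BANK_A", "*", date(2025, 1, 1), None, 0.0015),
]

old = evaluate(RULES, "settlement_fee", "BANK_B", "EQUITY", date(2024, 6, 30), 10_000)
new = evaluate(RULES, "settlement_fee", "BANK_B", "EQUITY", date(2025, 3, 1), 10_000)
special = evaluate(RULES, "settlement_fee", "BANK_A", "EQUITY", date(2025, 3, 1), 10_000)
```

Returning the rule reference together with the result is one simple way to keep every outcome attributable to a specific rule version.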

Event & Data Layer (Persistence)

States, movements, and status changes are persisted consistently. Historization enables traceability, reproduction, and long-term evaluations.

- Transactional persistence for consistent results

- History and correlation across processes

- Audit trail for evidence and audit matters
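
Historization can be pictured as an append-only list of status changes tied together by a correlation identifier. The sketch below is a simplified in-memory model with hypothetical names (`StatusChange`, `History`, `trade-42`), and it reconstructs the status of a process as of any point in time.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class StatusChange:
    correlation_id: str   # ties together all steps of one business process
    at: datetime
    status: str


class History:
    """Append-only store: entries are added, never updated or deleted."""

    def __init__(self) -> None:
        self._entries: list[StatusChange] = []

    def append(self, change: StatusChange) -> None:
        self._entries.append(change)

    def timeline(self, correlation_id: str) -> list[StatusChange]:
        matching = [e for e in self._entries if e.correlation_id == correlation_id]
        return sorted(matching, key=lambda e: e.at)

    def status_as_of(self, correlation_id: str, at: datetime) -> Optional[str]:
        """Status that was current at the given time, or None if none existed yet."""
        past = [e for e in self.timeline(correlation_id) if e.at <= at]
        return past[-1].status if past else None


history = History()
history.append(StatusChange("trade-42", datetime(2025, 3, 3, 9, 0), "RECEIVED"))
history.append(StatusChange("trade-42", datetime(2025, 3, 3, 9, 5), "MATCHED"))
history.append(StatusChange("trade-42", datetime(2025, 3, 5, 16, 0), "SETTLED"))
```

For example, `history.status_as_of("trade-42", datetime(2025, 3, 4, 12, 0))` returns the status that applied on that day, independent of later changes.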

Integration Layer (Interfaces & Adapters)

Integration via APIs, events, or file-based methods. Adapters decouple customer-specific formats from the core.

- API- and event-based integration

- Batch/file interfaces if required

- Adapter concept for proprietary formats
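
The adapter concept can be sketched as a small translation layer: a partner-specific file format goes in, a canonical event comes out, and the core only ever sees the canonical type. The file layout (`REF;ISIN;QTY;BS`), the class names, and the placeholder ISIN below are assumptions made for this example.

```python
import csv
import io
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class TradeEvent:
    """Canonical event consumed by the core (hypothetical shape)."""
    trade_id: str
    isin: str
    quantity: Decimal
    side: str


class PartnerCsvAdapter:
    """Maps one assumed partner format onto the canonical event."""

    SIDE_MAP = {"B": "BUY", "S": "SELL"}

    def parse(self, raw: str) -> list[TradeEvent]:
        reader = csv.DictReader(io.StringIO(raw), delimiter=";")
        return [
            TradeEvent(
                trade_id=row["REF"],
                isin=row["ISIN"],
                # The assumed partner format uses a decimal comma.
                quantity=Decimal(row["QTY"].replace(",", ".")),
                side=self.SIDE_MAP[row["BS"]],
            )
            for row in reader
        ]


raw_file = "REF;ISIN;QTY;BS\nT-1;XX0000000001;100,5;B\nT-2;XX0000000001;40;S\n"
events = PartnerCsvAdapter().parse(raw_file)
```

Supporting another partner format then means adding another adapter class, while `TradeEvent` and everything downstream stay unchanged.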

Observability & Audit

Monitoring of throughput, latency, and error rates, as well as a domain-level process view (correlation), supports operations, reconciliation, and evidence.

- Monitoring and alerting

- Domain and technical logging

- Audit trail for investigations and audit
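
To make the three technical metrics concrete, here is a small sketch that derives throughput, error rate, and latency figures from a list of step records. The record shape (`ok`, `latency_ms`) and the sample values are made up for the example.

```python
from statistics import mean


def summarize(records: list[dict], window_seconds: float) -> dict:
    """Aggregate step records collected during one observation window."""
    total = len(records)
    failed = sum(1 for r in records if not r["ok"])
    latencies = [r["latency_ms"] for r in records]
    return {
        "throughput_per_s": total / window_seconds,
        "error_rate": failed / total if total else 0.0,
        "latency_avg_ms": mean(latencies) if latencies else 0.0,
        "latency_max_ms": max(latencies) if latencies else 0.0,
    }


# Hypothetical records from a two-second window.
sample = [
    {"correlation_id": "trade-1", "ok": True, "latency_ms": 8},
    {"correlation_id": "trade-2", "ok": True, "latency_ms": 10},
    {"correlation_id": "trade-3", "ok": False, "latency_ms": 30},
    {"correlation_id": "trade-4", "ok": True, "latency_ms": 12},
]
metrics = summarize(sample, window_seconds=2)
```

Keeping the correlation identifier on each record is what allows the same data to feed both the technical metrics and a domain-level process view.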

Engineering principle: AI-assisted implementation

We use AI deliberately as a tool to support development, documentation, and test automation. This accelerates delivery and quality assurance.

- Frontend & UI: support for component development and reusability

- Documentation: faster creation and consistent technical descriptions

- Tests: AI-assisted generation and extension of test cases

- Quality: higher test coverage and more stable releases with short iteration cycles

Integration

DISACON CORE integrates into existing system landscapes. A typical approach is a phased rollout per process or product area, complemented by controlled migration and reconciliation.

Typical Surrounding Systems

- OMS / Trading: order creation, routing, execution

- Core banking / customer management: customer master data, account logic

- Custodians: settlement, holdings confirmations, corporate actions

- Reporting: regulatory and operational reports

- Data/BI: DWH, analytics, long-term evaluations

Rollout patterns

- Parallel Operation: coexistence for defined periods

- Stepwise Migration: introduction along clear process boundaries

- Controlled Cutover: cutover with evidence and reconciliation phases

The integration concept separates customer-specific formats from core processes. This keeps the platform maintainable even as surrounding systems change.

DISACON CORE is integrated as an open core into existing system landscapes: trading/order systems deliver events, downstream systems consume status updates, vouchers/records, and domain outputs.

Systems & data flows

Integration Principles

Loose coupling via events, stable APIs for synchronous access, and clear versioning for partner integrations. Domain decisions are made via rule sets and parameters rather than hardcoding.

Audit & Reprocessing

Status changes, deadlines, and exceptions are modeled as traceable processing steps. Reprocessing paths are explicit so corrections remain reproducible and auditable.

Operations & cloud architecture

The platform is designed for operation in cloud environments. Architectural decisions consider scalability, security, clear responsibilities, and evidence-ready operations in regulated contexts.

Operationally Relevant Characteristics

- Scalability: horizontal scalability of processing components

- Resilience: decoupled components, robust repeatability, controlled error paths

- Multi-tenancy: separation of data, configuration, and operational parameters

- Security: least privilege, segmentation, traceable changes

Deployment and operating models

Depending on the organization, different models are possible, ranging from fully managed operation through joint operation to self-operation with support.

- Managed Operation: operated by DISACON incl. monitoring, updates, security

- Enablement Operation: full or partial self-operation, with DISACON supporting setup and operations

AWS Architecture (abstracted)

The target AWS architecture separates processing, persistence, integration, and observability. Specific AWS services depend on the setup (tenant isolation, network segmentation, security requirements).

- Network & Segments: clear separation of public/private areas

- Compute: scalable workloads for processing

- Data: consistent persistence and historization

- Observability: Metrics, logs, traces, domain correlation

Environments

DISACON CORE is operated across separated environments to test changes in a controlled manner and promote releases safely into production.

- Separation by stages: separate accounts/namespaces for DEV, UAT, and PROD

- Release mechanism: promoted artifacts (builds) instead of manual interventions

- Traceability: clear versioning and reproducible deployments

- Stability: minimized risk through controlled transitions between environments
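
The release mechanism can be illustrated with a short sketch in which one immutable artifact is promoted stage by stage, and a promotion is refused unless the same artifact is already deployed in the preceding stage. `ReleaseTrack` and the artifact identifier are hypothetical, and real pipelines are configured per environment.

```python
STAGES = ["DEV", "UAT", "PROD"]


class ReleaseTrack:
    """Tracks which artifact is deployed in which stage."""

    def __init__(self) -> None:
        self.deployed = {stage: None for stage in STAGES}
        self.log: list[tuple[str, str]] = []  # (artifact, stage) in order

    def deploy_to_dev(self, artifact: str) -> None:
        self.deployed["DEV"] = artifact
        self.log.append((artifact, "DEV"))

    def promote(self, artifact: str, target: str) -> None:
        index = STAGES.index(target)
        if index == 0:
            raise ValueError("use deploy_to_dev for the first stage")
        previous = STAGES[index - 1]
        # Only the exact artifact running in the previous stage may move on.
        if self.deployed[previous] != artifact:
            raise RuntimeError(f"{artifact} is not deployed in {previous}")
        self.deployed[target] = artifact
        self.log.append((artifact, target))


track = ReleaseTrack()
track.deploy_to_dev("core-build-1001")

try:
    track.promote("core-build-1001", "PROD")  # skipping UAT is rejected
    skipped_uat = True
except RuntimeError:
    skipped_uat = False

track.promote("core-build-1001", "UAT")
track.promote("core-build-1001", "PROD")
```

Because the artifact is never rebuilt between stages, what reaches PROD is exactly what was tested in UAT, and the log doubles as a deployment record.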

Overview of the central architecture building blocks and cloud infrastructure: system structure, platform core, data storage, integration of external systems, and AWS operations incl. CI/CD, security, and traceability.

How is the system structured at a high level?

Which domain modules does the platform core cover?

- Account management

- Custody management

- Order Management

- Payment processing

- Securities processing

- Taxes and regulation

- Reporting

- Backoffice Operations

Publicly we show the capability view. The internal service decomposition is part of the technical documentation.

What is the ANGELA framework?

Which data is stored where?

How are external systems connected?

Concrete field mappings and partner formats are project-specific and are maintained in the implementation documentation.

Which user groups and clients exist?

What does the AWS cloud infrastructure look like?

How do CI/CD and deployment work (multiple AWS accounts)?

Details such as concrete pipeline stages, branch policies, and deployment strategies are configured per environment.

How are security and traceability addressed?

- Ingress via defined gateways (CDN, API Gateway, load balancer)

- Authentication/authorization via OIDC/IAM

- Historization and correlation of processing steps for auditability

- Monitoring/logging and archiving (CloudWatch, S3 log archive)

Customer-specific: tenant isolation, encryption (KMS), backup/DR, network segments, and security controls.

Can the solution be scaled efficiently?

Our architecture enables stepwise scaling up to 10,000% while keeping base costs constant. This allows large volumes to be processed at predictable costs, without a cost explosion as you grow.

Why Erlang?

Erlang was designed specifically for highly available, fault-tolerant, and scalable systems—originally in telecommunications, where billions of messages are processed in real time. These properties are essential for maximum reliability.

Why business logic directly in the database?

By placing the central logic directly in the database, we avoid complex middleware, reduce sources of error, and significantly accelerate processing. The result: traceable processes and maximum performance without unnecessary intermediate layers.

Are these proven technologies?

We rely on an established architecture with modern technology stacks that have already proven themselves in highly sensitive applications such as WhatsApp or Klarna.

How is performance with increasing volumes?

Even with millions of assets per day and under high load, processing time per transaction is only 10 milliseconds.

Is DISACON CORE tied to a specific cloud provider?

DISACON CORE is operated on hyperscaler infrastructure in the cloud. The platform is designed so that, in the future, operation can also be implemented in multi-cloud or data-center environments without replacing the solution’s core.

Is tax calculation integrated in DISACON CORE?

Yes. DISACON CORE includes integrated tax processing for capital income for the German market. The tax logic is part of the processing chain and can therefore be embedded directly into end-to-end processing.

Can an external tax engine be connected (e.g., SECTRAS)?

Yes, in principle. DISACON CORE can be integrated so that an external tax engine, such as SECTRAS by cpb, is used as part of the end-to-end processing chain. Detailed domain and technical alignment on how an external tax engine can be cleanly integrated into the existing process logic has already been carried out. A production connection is typically a separate integration project.

Is there a certification for the system?

Yes. We are currently working together with Deloitte on an IT audit according to IDW PS 860 with a focus on the GoBD requirements for the ANGELA framework of the DISACON CORE processing platform. Completion of the audit and provision of the audit report are currently expected by the end of the first quarter (Q1).

How is tenant isolation implemented in DISACON CORE?

Tenant isolation at DISACON is implemented at the infrastructure level. Each tenant receives a fully separated operating environment with clear technical isolation. With cloud operations, this separation can be implemented particularly efficiently and cost-effectively. Provisioning is fully automated via infrastructure as code, enabling new tenant environments to be delivered and operated quickly, securely, and in a standardized manner.
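
As a rough illustration of standardized, automated provisioning, the sketch below derives a separate set of resource names for each tenant from one template and checks that no resource is shared between tenants. The naming scheme, the resource kinds, and the function names are assumptions. An actual setup would use an infrastructure-as-code tool rather than plain Python.

```python
RESOURCE_KINDS = ("network", "database", "encryption_key", "log_archive")


def tenant_environment(tenant_id: str, region: str) -> dict:
    """Render the environment description for one tenant from a fixed template."""
    if not tenant_id.isalnum():
        raise ValueError("tenant_id must be alphanumeric")
    prefix = f"core-{tenant_id.lower()}"
    return {
        "tenant": tenant_id,
        "region": region,
        "network": f"{prefix}-net",
        "database": f"{prefix}-db",
        "encryption_key": f"{prefix}-key",
        "log_archive": f"{prefix}-logs",
    }


def assert_isolated(environments: list[dict]) -> None:
    """Fail if any resource name appears in more than one environment."""
    seen: set[str] = set()
    for env in environments:
        for kind in RESOURCE_KINDS:
            name = env[kind]
            if name in seen:
                raise ValueError(f"resource {name} is shared between tenants")
            seen.add(name)


env_a = tenant_environment("BankA", "eu-central-1")
env_b = tenant_environment("BankB", "eu-central-1")
assert_isolated([env_a, env_b])
```

Generating every environment from the same template is what keeps tenant setups standardized, while the per-tenant prefix keeps their resources separate.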

Is operation as a custodian bank possible?

Yes. DISACON CORE can also be used in the context of a custodian bank. The platform supports the required processes and integrations to model custodian-specific requirements and enable operations in an appropriate role setup.

Is white-label operation possible?

White-label is on DISACON’s roadmap and enables operating multiple tenants under your own umbrella. Customers are operated in separate environments with their own data storage, ensuring full data isolation, high performance, and audit-proof processes.

White-label operation for tenants without their own umbrella is generally possible and can be realized as part of an individual project. However, this is currently not intended as a standard offering.

Technology Stack

DISACON CORE is based on established technologies and proven tools. The excerpt below shows the most important building blocks; the selection follows the goal of ensuring operational reliability, maintainability, and long-term integrability.

Consistent relational data storage for business data, history, and analytics.

Parallel processing of large data volumes for stable and robust services.

Unified, standardized API descriptions as the basis for stable integrations.

Modern frontend development for stable, reusable UI components.

Cloud infrastructure and operational foundation for scalable deployments, security, and observability.

Transparency instead of lock-in

The stack is chosen so that platform operations and further development remain predictable: clear interfaces, traceable changes, and the ability to operate the platform in other cloud or data-center environments as well, without replacing the overall core.

Amazon Web Services, AWS and the corresponding logos are trademarks of Amazon.com, Inc. or its affiliates. All mentioned trademarks and logos are the property of their respective owners and are used solely to describe the technologies employed.